8-minute read; 2 minutes for the Overview and bold text.

Overview

In Hi-Fi speakers “Quality is in the mid-range”; in investing “It’s never as good or bad as it seems”; in Buddhism “Avoid extremes; follow the Middle Way.” So too, LLMs occupy a nuanced middle between hype and dashed hopes. This post reveals two axioms that recenter LLMs on a table around which managers can draft predictable ROI from LLM deployments. The Quality–Scope Decision Matrix halts the epidemic of time wasted on LLM uses that fail to deliver as hoped.

I post rarely and only when your reading each word delivers transformative value. Still, if you don’t have 8 minutes to read it all, plus 3 to grasp the Decision Matrix below, here’s the 30-second gist:

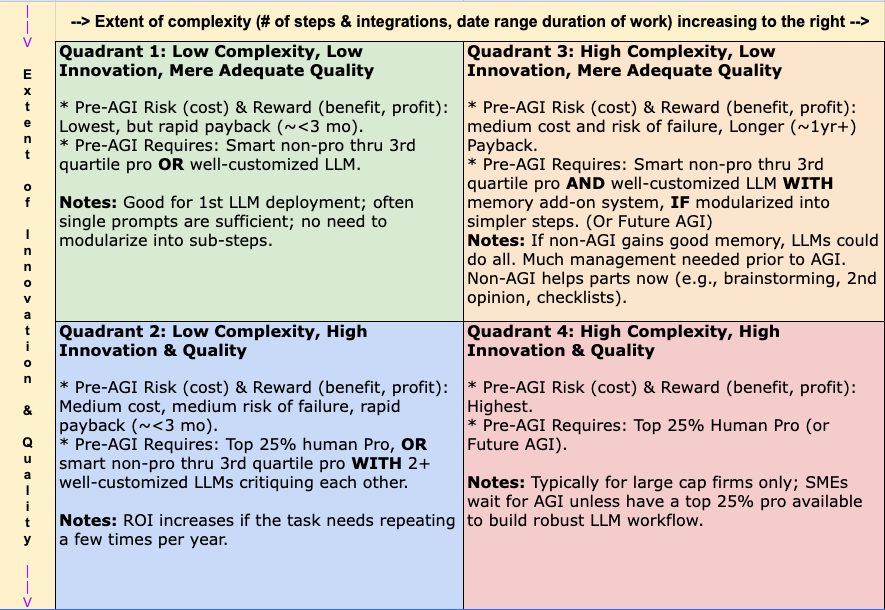

Recent AI market volatility and “Model Collapse” warnings obscure a critical reality: LLMs offer SMEs predictable ROI only if managers delegate by required quality/innovation and project complexity. Introducing the Quality–Scope Decision Matrix for LLM Deployment, this post shows a Middle Way that balances transformers’ inherent averaging and memory problems with the ROI of compensatory tactics built into workflows. By distinguishing between “Adequacy Only” tasks and high risk-reward “Pro” requirements, we reveal an Efficient Frontier for SMEs. Centered on a new decision matrix, this guide provides a roadmap for integrating LLMs’ Ferrari engines into your corporate chassis today while steering toward the imminent AGI ramp. Learn to identify worthy small-scope deployments that pay back within three months while building expertise and components for complex pro-level projects both before and after AGI. While competitors spin their wheels, your LLM workflows chosen for dependable ROI become assets that pay dividends and pave your AGI on-ramp. The Quality–Scope Decision Matrix realizes DISC’s principle that “Innovation Efficiently Integrated Is Integrity Proven by Results.”

Digesting the ~2-3 hours of linked sources will greatly sharpen your 360-degree vision surrounding this post.

Clarifying AGI and its Birthday

Let’s leave aside whether AI stock prices and projected returns on capital show a bubble, and instead focus on business efficiencies in SMEs’ deployment of LLMs. In contrast, enterprise deployments are greatly augmented with dedicated compute, proprietary data, persistent memory, and pre-LLM automation checkpoints, so they operate in a separate league. These layers of augmentation within and around enterprise LLM stacks produce what looks like AGI now, but it is not AGI, precisely because so much pro labor goes into the augmentation. The impact of AI/LLMs on employment emerges from the economies of scale wherein expensive human engineering is repaid by numerous job terminations. However, AGI, as I define it below and as most people imagine it, would not require such enterprise-grade augmentations.

In my September 2023 blog post, LLMs agreed with my thesis that “autonomous synergies [essentially AGI] won’t happen for 3 years, maybe 5.” Definitions of AGI writhe like a Klingon delicacy, so let’s cook it down to a sensible middle: AGI is here when LLMs can (1) autonomously perform most deskwork as well as 75% of professionals, (2) with at most 75% of pre-LLM cost, and (3) this capability is proven by an ample variety of firms.

Recent news makes Q4 2027 to Q4 2028 the likely birthday of this AGI. (That is 4 to 5 years after my 2023 “3 to 5.”) A compressed summary of my years and countless hours of R&D backing this projection would fill pages, so for this and other axioms below I provide only a few primer sources. The first two below are required for anyone navigating the emergent LLM workforce:

- The super-viral article, Something Big Is Happening, 2/9/26, 15-minute read time.

- The January ’26 Phase Change, early February 2026, 24-minute video.

Sure, good AI pros can make agentic workflows that perform close enough to top pro quality today, but the goal–or threat–is that any non-IT manager could verbally convey objectives to an LLM–just like for a human pro–so that with minimal supervision the LLM implements all well. One of countless proofs that we aren’t at AGI now is that my firm’s well-tuned LLMs could not handle autonomous production of the core Quality–Scope Decision Matrix below. The Matrix’s twin axioms operate by an algorithmic logic that mere pattern matching could not represent without a human pro’s handholding, and I surmise that such failures greatly outnumber the selective cases of success.

Understanding AGI for SMEs

Current and future ROI from LLMs requires (1) discerning two kinds of deployment and two levels of quality needed, then (2) applying to that matrix an understanding of (a) the memory problem at the core of LLM tech and (b) LLMs’ convincing use of averaging to cover their lack of algorithmic deductive reasoning. We’ll examine each of these parts, and then put them together in a decision matrix you can use, with your LLM’s help, to achieve measurable LLM success.

The Averaging and Memory Problems

Here’s an excellent primer: Model Collapse Ends AI Hype. Several hours of studying how transformers work can reveal that these two weaknesses are inherent and inextricable, though for best results they can and must be managed within presiding workflows.

- LLMs Average Pro Knowledge: LLMs cannot reliably use deductive reasoning to apply long-learned professional concepts to unique situations. While there are compelling hints that LLMs can abstract from a pattern in one domain something like a principle that is later applied to a new problem (innovation), the evidence is anecdotal and analogical, not systematic. And rare occurrences would be far from dependable. Although average pro knowledge is often “good enough for government work,” often it isn’t.

- Noteworthy but beyond our focus here is the fascinating theory that the quality of LLMs’ output will decline as it averages ever more public content that itself consists of prior LLM averaging. Read LLMs’ terms of service closely, for one solution to this “dead internet” of hollow averages is using clients’ valuable documents to inject new life.

- Insufficient Medium- and Long-term Memory: The “hyperscalers,” like ChatGPT and Gemini, purport to offer project and account memory, but it’s spotty, omitting crucial points and work milestones that a human pro must and would remember. A few good semi-automatic memory solutions exist now; LLMs’ ongoing management roles require at least one of them. These solutions put the capstone on the essential structure of context (data, brand briefs, goals, etc.) by which one informs any kind of pro. This guru explains beautifully: My AI Workflow. My firm has been using a simple system integrated with Google Drive. Memory that evolves as weeks and months of work shift tasks and priorities is a must, yet it’s porous and fleeting in LLMs’ current core tech. AGI depends on fixing this lack.

The Two Levels of Quality Needed

- Adequacy Only: Very often managers (and home users) can be fully satisfied by mere adequacy, and don’t need top pro quality. Examples include finding weaknesses in a lease or car insurance contract, troubleshooting a network outage, routine legal procedures, and a summary of current treatment options. Think of how often a merely mediocre pro’s guidance is all you need. LLMs deliver stellar value in these areas.

- A Top 25%+ Pro Required: Complex, high-cost (risk) and high-return (benefit, profit) jobs exceed what LLMs can deliver dependably. Shoving aside the jubilant mob of YouTubers and reading trenchant studies on LLMs’ fundamental lack of true deductive reasoning reveals that not only is such quality unavailable now, but it may never be available within the 3 to 5 year depreciation cycle to which current transformers (like Nvidia chips) are bound. Sound studies reveal that the apparent reasoning steps in Chain of Thought (CoT) methods are actually derivative statistical constructs, not algorithmic logic that innovates like very good human pros.

The Two Kinds of Deployment

- Customized LLMs (GEMs, “Projects”) that meet small-scope, one-off needs: Examples include paralegal work, single contract reviews, complex yet common purchase decisions, and intro training in most fields. YouTubers splattering spaghetti on the wall for their own lead gen provide plenty of examples of such successful use cases. For these tasks, LLMs typically repay all money and time invested within 3 months if not immediately. Repeats of such tasks increase the ROI dramatically.

- Customized LLMs that handle all parts of major projects: Examples include website design/build, year-end CPA filings, or building a complete automated R&D system. Don’t believe the YouTubers with contorted faces who declare this is doable now without skilled, resource-heavy setup. (I think a YouTube channel focusing on all the things LLMs can’t do would win big, if Google’s YouTube doesn’t suppress it.)
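The three-month payback claim for small-scope deployments is easy to check with simple arithmetic. Here is a minimal Python sketch, assuming a one-time setup cost and a steady monthly net saving. The function name and the dollar figures are hypothetical illustrations, not DISC data; only the 3-month target comes from the text above.

```python
def payback_months(setup_cost: float, monthly_net_saving: float) -> float:
    """Months until cumulative savings cover the upfront cost."""
    if monthly_net_saving <= 0:
        return float("inf")  # the deployment never pays for itself
    return setup_cost / monthly_net_saving


PAYBACK_TARGET_MONTHS = 3  # threshold taken from the post

# Hypothetical figures: 1,500 spent on setup, 600 saved per month.
months = payback_months(1500, 600)
meets_target = months <= PAYBACK_TARGET_MONTHS
print(f"Payback in {months} months; meets target: {meets_target}")
```

Repeats of the same task raise the monthly saving without raising the setup cost, which is why repetition shortens the payback period so sharply.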

The Quality–Scope Decision Matrix for LLM Deployment

Here, the above axioms are distilled into a matrix, like the Eisenhower (Urgent vs. Important) matrix, for choosing what to delegate to an LLM “employee.”

Pointers:

- This decision matrix requires a few minutes to grasp; it will save your team tons of time deploying LLMs for competitive advantage.

- When AGI arrives, it will take another 1 to 3 years for it to transform the majority of business. Firms that have deployed LLMs prior to AGI will be primed to benefit from this new LLM power, conferring a huge competitive advantage.

- The bigger the firm, the greater the percent of projects that tend to appear in quadrants 3 and 4.

- You should add this whole post and its matrix to your LLM’s Knowledge Base to help discover your unique “Efficient Frontier” in LLM deployment. (I thought about vibe-coding an app that helps people use this tool, but as of January 2026, properly set up LLMs will guide effective use.)

- Assumes competent prompting and context (Knowledge Base & Custom Instructions).

- You’ll know you understand this matrix if you can see why the arrival of AGI will shift more work at more kinds of firms into quadrants 3 and 4. (True, your LLM could explain why, but this is a concept that managers should comprehend.)

The Quality–Scope Decision Matrix for LLM Deployment

(Left axis, “Extent of Innovation & Quality,” increases downward.)

Contact DISC for a free Excel or Sheet version of this matrix. Your team, including your LLM, can situate your projects in it to prioritize by ROI.
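For teams that prefer a script to a spreadsheet, situating projects in the matrix can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the class and function names are hypothetical, the cell assignments of the sample projects are illustrative guesses, and the ROI figures are invented. It groups projects by the two axes described above and ranks each cell by estimated ROI. It does not reproduce the matrix's quadrant numbers or its cell-by-cell guidance.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Quality(Enum):
    ADEQUACY_ONLY = "Adequacy Only"
    TOP_PRO = "Top 25%+ Pro Required"


class Scope(Enum):
    SMALL = "Small-scope, one-off"
    MAJOR = "All parts of a major project"


@dataclass
class Project:
    name: str
    quality: Quality
    scope: Scope
    est_roi: float  # hypothetical estimate, e.g. 1.5 means a 150% return


def situate(projects):
    """Group projects by matrix cell, highest estimated ROI first."""
    cells = defaultdict(list)
    for p in projects:
        cells[(p.quality, p.scope)].append(p)
    for cell in cells.values():
        cell.sort(key=lambda p: p.est_roi, reverse=True)
    return dict(cells)


# Sample projects borrow names from the post; placements and ROI are made up.
projects = [
    Project("Single contract review", Quality.ADEQUACY_ONLY, Scope.SMALL, 2.0),
    Project("Intro training program", Quality.ADEQUACY_ONLY, Scope.SMALL, 3.0),
    Project("Website design/build", Quality.TOP_PRO, Scope.MAJOR, 0.5),
]
matrix = situate(projects)
for (quality, scope), items in matrix.items():
    print(f"{quality.value} / {scope.value}: {[p.name for p in items]}")
```

Keeping the two axes as explicit enums forces each project to be judged on quality needed and on scope separately, which is the discipline the matrix asks of managers.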

Appendix: The Only Other Guidelines You Need

Only guidelines that LLMs are unlikely to give you are worth gathering. LLMs are superb for customizing free intro to intermediate training programs, which means that anybody selling ongoing AI/LLM training lacks smarts or integrity or both. However, recall that LLMs’ averaging of pro content tends to omit rare excellence, making this list valuable. Furthermore, it stands to reason that financial incentives across the web may suppress guidelines that could reduce revenue to (a) hyperscalers like Google’s Gemini and (b) platforms that sell advertising to the Magnificent Seven, their legion subdivisions and partners, and other large firms.

I use DISC’s standard P1 through P5 designations to assign importance from highest to almost negligible.

- P1 – When AGI arrives, it will take another 1 to 3 years for it to transform the majority of business. Firms that have deployed LLMs prior to AGI will be primed to benefit from this new LLM power, conferring a huge competitive advantage.

- P1 – It’s crucial to distinguish LLM IQ from effective workflow integrations. As one LLM guru said 2 years ago, even an old 2023 model is like giving a Ferrari engine to a 1920s car maker: driveable benefits require integrating the power within the corporate chassis–its workflow, offerings, and marketing. For an excellent elucidation of integration bottlenecks, see this 2/27/26 video (32 minutes). Your take-away: With LLM IQs now high, your building context and evolving memory in your LLM “executives” is the primary determinant of successful deployment, more so than LLM benchmarks.

- P2 – Your LLM’s Custom Instructions should require output to contain a “Contrarian Review” toward the end. This helps prevent hallucinations and the LLM’s tendency to elaborate on one pattern at the expense of counterbalancing perspectives. This final section will often reveal crucial weaknesses in the prior output which are often caused by prompts that stack the deck in favor of prompter bias. Here’s an example Contrarian Review in one of DISC’s custom LLMs, pertinent to this blog post:

- “Your framework may underestimate how rapidly hybrid systems could narrow the gap between “average pro” and “top-20% pro,” especially in structured domains where excellence depends on disciplined workflows. Pure next-token transformers lack intrinsic deductive guarantees, so vanilla stateless models alone are unlikely to reach consistent elite dependability. But the transformer backbone will likely persist while being augmented by memory, recurrent mechanisms, retrieval, and verification layers. In such system-engineered AI stacks, standalone LLMs may remain insufficient, yet increasingly automated workflows could approximate high-level professional performance even as full autonomous replacement remains premature.”

- P2 – Optimizing your Cloud for AI/LLMs (as in DISC’s OCA package) remains your foundational step one. You’ll discover (or your LLM will tell you) why, but in short, smart management of memory and workflow is enabled by a well-organized file system.

- P2 – Optimizing your use of the Quality–Scope Decision Matrix and all phases of LLM deployments benefits greatly from sharing experiences with peers, even with competitors. For example, the principle that open-source sharing lifts all boats underlies DISC’s SALI, which are “Seminars for the Agency LLM Initiative” (ALI) and for ALI partners like LivingLocal413.org.

- See The Few & Only LLM Guidelines You Need for the complete list.

Sources

- This post was entirely written by DISC’s CEO Rob Laporte, though proofreading, some fact checking, and the Contrarian Review were done by DISC’s custom LLMs.

- The videos and articles linked above are among the very best I’ve encountered–highly recommended. Some links to videos replace zinger titles with my descriptive names.

- The prior 6-post series on LLMs for business remains valid and sound today, testifying to my semi-idiot, semi-savant capacities.